FinOps for AI: Tools & Services Considerations

Summary: Practitioners need to understand where a tool sits in the AI lifecycle, whether training, fine-tuning, inference, orchestration, or observability, and evaluate whether it is genuinely necessary, correctly scoped, and worth its cost. Identifying the major AI tooling categories, and characterizing what the tools actually do, builds an understanding of how to optimize usage and generate value.

AI billing details often come wrapped in challenging technical language. Terms like “distributed inference,” “KV cache,” or “LoRA adapter” are not meant to confuse, but the quickly evolving AI space introduces a great deal of new vocabulary and nuance. The problem is that without understanding where in the AI lifecycle a tool is used, it is more difficult to evaluate whether the spend is justified, right-sized, or duplicating something already paid for.

Use this asset as a map to navigate AI tooling cost and usage. By the end, you should be able to look at any AI tool request and place it in context, understanding what problem it solves, what it costs, and what signals suggest it is being used effectively.

One overarching principle to keep in mind: AI costs compound. Unlike traditional cloud workloads, where over-provisioning wastes money linearly, AI systems often have multiplicative cost structures. A poorly managed training run, an unthrottled inference endpoint, or an agent that loops excessively can generate bills that multiply within hours. AI tooling exists, in large part, to control these compounding risks.

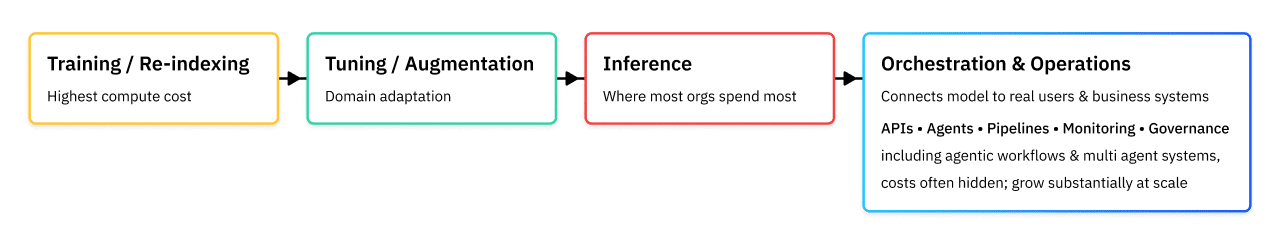

The AI Lifecycle: A Map for FinOps

Understanding this lifecycle prevents a common FinOps mistake: approving tools that solve problems at the wrong phase, or funding infrastructure for phases that were never necessary in the first place.

The Primary Phases

Training/Re-indexing is the most computationally expensive phase, where a model learns from large datasets using massive GPU clusters. Most organizations will never need to train a foundation model from scratch. This is normally the domain of companies like OpenAI, Anthropic, Meta, and Google. If a team is requesting training infrastructure for a general-purpose model, it requires significant scrutiny. The need to train models internally must be driven by a regulatory, compliance, or competitive requirement with specific, well-documented offsetting business benefits.

Tuning / Augmentation is a lighter process where a pre-trained model is adapted to a specific domain or task using a smaller, curated dataset. This can be a legitimate organizational need, particularly in regulated industries or where proprietary data provides a genuine competitive advantage.

Inference is when the trained model is actually used to generate outputs, such as answering a question, writing a document, or classifying an image. This is the phase that most organizations spend the most money on, and where the majority of cost optimization levers exist.

Orchestration & Operations encompasses everything that connects the model to real users and business systems: APIs, agents, pipelines, monitoring, and governance. Costs here are often hidden but grow substantially as systems scale. This phase also includes the elements that allow individual model calls to be combined into agentic workflows and multi-agent systems.

The next sections discuss the tooling you are likely to see in each of the phases, and provide considerations for their evaluation and use.

Training Phase

Training Infrastructure & Frameworks

Training frameworks like DeepSpeed, Unsloth, PyTorch FSDP, MosaicML Composer, Hugging Face Accelerate, and Ray distribute the enormous computational task of training across many GPUs or nodes simultaneously. Without them, training a large model on a single machine would take months or be technically impossible.

These tools primarily address two problems. First, they coordinate how a model’s parameters, gradients, and optimizer states are split across many GPUs, a technique called model sharding, so that no single device runs out of memory. Second, they manage the scheduling and scaling of compute resources, spinning GPUs up and down to match workload demand and minimize idle time.

Idle GPUs are the enemy of training economics. A GPU that is not performing computation costs the same as one that is. Frameworks like DeepSpeed ZeRO and Ray address this by keeping GPUs active and synchronized, and by enabling the use of lower-cost “spot” or preemptible instances (which can be interrupted by the cloud provider) with checkpointing, the ability to save training state and resume after an interruption.

FinOps Considerations

The first question to ask is whether training is necessary at all. Training a foundation model from scratch can cost millions of dollars in compute alone. Figures of $5M to $100M+ are not unusual for frontier models. For the vast majority of enterprise use cases, using a managed model through an API (from OpenAI, Anthropic, Google, or others) is dramatically more cost-effective and should be the default assumption until proven otherwise.

If a team is requesting training infrastructure, demand a clear articulation of why an existing pre-trained model cannot meet the need. Legitimate answers include: extreme data privacy requirements that prevent sending data to a third-party API, highly specialized domains where no existing model performs adequately, or very high inference volumes where owning a model becomes cheaper than paying per-token over time.

When training is genuinely necessary, the key cost levers to ask about are: use of spot/preemptible instances (which can reduce compute costs by 60–80% with proper checkpointing), mixed precision training (FP16 or FP8 instead of FP32, which reduces memory and speeds computation), and whether GPU utilization metrics are being actively monitored. A cluster running at 40% GPU utilization is wasting more than half its spend.
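The arithmetic behind these levers can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the hourly rate, cluster size, spot discount, and rework fraction are hypothetical inputs, and the function names are invented for the example.

```python
def idle_spend(hourly_rate: float, gpu_count: int, hours: float, utilization: float) -> float:
    """Portion of cluster spend that paid for idle GPU time."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    total_spend = hourly_rate * gpu_count * hours
    return total_spend * (1.0 - utilization)


def spot_run_cost(on_demand_cost: float, spot_discount: float, rework_fraction: float) -> float:
    """Cost of the same run on spot capacity.

    rework_fraction is the extra compute spent redoing work lost between
    the last checkpoint and each interruption (an assumed input).
    """
    return on_demand_cost * (1.0 - spot_discount) * (1.0 + rework_fraction)


# Hypothetical cluster: 8 GPUs at $4.00 per GPU-hour for 100 hours.
total = 4.00 * 8 * 100
wasted = idle_spend(4.00, 8, 100, utilization=0.40)
spot = spot_run_cost(total, spot_discount=0.70, rework_fraction=0.05)
print(f"total ${total:,.0f}, idle ${wasted:,.0f}, on spot ${spot:,.0f}")
```

With these assumed inputs, 60% of the spend pays for idle time at 40% utilization, and the spot discount survives a modest amount of redone work. Real rework depends on checkpoint frequency and interruption rates, which should come from the team's own measurements.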

Tuning / Augmentation Phase

Fine-Tuning & Adaptation Tools

Fine-tuning adapts a pre-trained model to a specific task or domain without retraining it from scratch. Parameter-Efficient Fine-Tuning (PEFT) methods, particularly LoRA (Low-Rank Adaptation) and its variants, are the dominant approach. Instead of updating all of a model’s billions of parameters, LoRA inserts small, trainable matrices that represent the changes needed for the new task. The base model stays frozen; only these small adapters are trained and stored.
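To see why the adapters are so small, consider a single weight matrix. In the standard LoRA formulation, a frozen matrix of shape d_out x d_in is paired with two trainable matrices of shapes d_out x r and r x d_in, where r is the rank. The sketch below counts parameters for one such matrix; the dimensions and rank are illustrative values, not taken from any particular model.

```python
def full_matrix_params(d_out: int, d_in: int) -> int:
    """Parameters in the frozen base weight matrix."""
    return d_out * d_in


def lora_adapter_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters in the two low-rank adapter matrices (d_out x r and r x d_in)."""
    return rank * (d_out + d_in)


d_out, d_in, rank = 4096, 4096, 8  # illustrative sizes
full = full_matrix_params(d_out, d_in)
adapter = lora_adapter_params(d_out, d_in, rank)
print(f"{adapter:,} trainable vs {full:,} frozen ({adapter / full:.2%})")
```

For these sizes the adapter is well under 1% of the matrix it modifies, which is why fine-tuning compute and adapter storage are a small fraction of full training.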

Tools in this category include Hugging Face PEFT, LLaMA-Factory, Axolotl, and MosaicML (now part of Databricks), with Weights & Biases commonly used alongside them for experiment tracking. Fine-tuning runs are considerably cheaper than training, often achievable for hundreds to a few thousand dollars rather than millions. But they still require GPU resources, careful data curation, and evaluation infrastructure.

FinOps Considerations

Fine-tuning is often proposed as the solution when a model is producing poor results in production. Before approving a fine-tuning project, ask whether prompt engineering has been thoroughly attempted first. A well-crafted system prompt, with clear examples of desired behavior, can frequently achieve the same quality improvement as fine-tuning at a fraction of the cost and in hours rather than days or weeks.

When fine-tuning is justified, the FinOps questions center on: the cost of data preparation and labeling (often underestimated and sometimes exceeding the compute cost), the frequency of retraining (a model that needs to be retrained weekly has a very different TCO than one trained once), and the infrastructure required to serve the resulting fine-tuned model versus using a managed API. Serving your own fine-tuned model means taking on the operational costs of inference infrastructure permanently.

Model-Level Optimization

This category covers techniques that make models themselves cheaper to run, without changing the underlying training or serving infrastructure.

Quantization reduces the numerical precision of model weights (for example, from 32-bit floating point to 8-bit or 4-bit integers), shrinking memory requirements and increasing inference speed, usually with minimal quality degradation. Tools like GPTQ, AWQ, and llama.cpp implement quantization for open-source models.
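The memory effect of quantization is simple arithmetic: parameter count times bits per weight. The sketch below estimates weight memory only, under the assumption that 1 GB is 10^9 bytes; it ignores the KV cache, activations, and the small metadata overhead that quantization formats add, so real footprints will be somewhat higher.

```python
def weight_memory_gb(param_count: int, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    return param_count * bits_per_weight / 8 / 1e9


params = 7_000_000_000  # a 7-billion-parameter model
for bits in (32, 16, 8, 4):
    print(f"{bits:>2}-bit: {weight_memory_gb(params, bits):.1f} GB")
```

The practical consequence for FinOps is that a lower-precision model may fit on a smaller, cheaper GPU class, or allow more concurrent requests on the same hardware.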

Distillation trains a smaller “student” model to mimic the outputs of a larger “teacher” model, producing a model that achieves comparable quality on a specific task at a much lower inference cost. This is how models like GPT-4o Mini and Claude Haiku are positioned. They are smaller, faster, cheaper, and adequate for a wide range of tasks.

Pruning removes redundant parameters or attention heads from a model. Mixture-of-Experts (MoE) architectures used in models like Mistral’s Mixtral activate only a fraction of the model’s parameters for each query, achieving the quality of a large model at the inference cost of a smaller one.

Speculative decoding uses a small draft model to predict multiple tokens at once, which a larger model then verifies in parallel, effectively increasing output throughput without sacrificing quality.

FinOps Considerations

Model selection is one of the highest-leverage cost decisions in any AI system, and it is often made once and revisited infrequently. A common architectural mistake is selecting a frontier model (GPT-4, Claude Opus, Gemini Ultra) for all tasks, when a smaller model handles the majority of queries adequately.

The concept of model routing or cascading is worth understanding. In this pattern, a lightweight model handles easy queries; only when it is insufficiently confident is the query escalated to a larger, more expensive model. This can reduce average inference cost by 60–80% for workloads with a mix of simple and complex queries. Ask whether the team has evaluated model routing as part of their architecture.
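A rough way to sanity-check a routing proposal is to compute the blended cost per query. The sketch below assumes a simple cascade in which every query is first sent to the small model and a fraction is escalated to the large one; the per-query costs and the escalation rate are hypothetical.

```python
def cascade_cost_per_query(small_cost: float, large_cost: float, escalation_rate: float) -> float:
    """Every query pays for the small model; escalated queries also pay for the large one."""
    return small_cost + escalation_rate * large_cost


def savings_vs_large_only(small_cost: float, large_cost: float, escalation_rate: float) -> float:
    """Fractional saving compared with sending every query to the large model."""
    return 1.0 - cascade_cost_per_query(small_cost, large_cost, escalation_rate) / large_cost


# Hypothetical: $0.001 per small-model query, $0.02 per large-model query, 20% escalated.
blended = cascade_cost_per_query(0.001, 0.02, 0.20)
saving = savings_vs_large_only(0.001, 0.02, 0.20)
print(f"blended cost ${blended:.4f} per query, saving {saving:.0%}")
```

The saving depends almost entirely on the escalation rate, so that is the number to ask the team to measure on production-representative traffic.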

When reviewing model choices, request that the team benchmark quality against cost for their specific use case, not against generic benchmarks. A model that ranks poorly on academic benchmarks may be perfectly adequate for a specific enterprise task, at a tenth of the cost.

Caching & Middleware

Caching layers intercept inference requests, check whether an equivalent response has already been computed, and return the cached result if available, bypassing the model entirely or reducing the computation required. This is one of the highest-ROI optimization categories for production AI systems.

Prompt caching (offered natively by Anthropic, OpenAI, and Amazon Bedrock) stores portions of the input context, particularly long system prompts or frequently repeated documents, so they do not need to be reprocessed with every request. This directly reduces input token costs for any workload where significant context is repeated across requests.

Semantic caching (tools include GPTCache by Zilliz, and custom implementations using vector databases) goes further: rather than requiring exact input matches, it identifies queries that are semantically similar to cached queries and returns the cached response. This is particularly valuable for FAQ-style workloads where users ask the same questions in different words.

KV cache is a lower-level optimization within inference servers (like vLLM) that stores the intermediate computation states for the input tokens already processed, so that generating each subsequent output token only requires computing from the last position rather than reprocessing the full context. This has a major impact on the cost of long-context workloads.

FinOps Considerations

Prompt caching deserves immediate attention for any production workload. For applications with long system prompts, detailed instructions, policies, or context documents sent with every request, prompt caching can reduce costs by 50–90% on the cached portion of the input. The mechanism varies by provider: Anthropic’s implementation requires marking cacheable sections explicitly; OpenAI’s applies automatically above a token threshold. This is a low-effort, high-return optimization.
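The effect on a single request can be estimated as follows. This is a simplified sketch: the token counts, the price per million input tokens, and the discount on cached tokens are hypothetical, and it ignores any surcharge a provider may apply when the cache is first written. Check the provider's current price list before relying on the numbers.

```python
def input_cost(cached_tokens: int, fresh_tokens: int,
               price_per_million: float, cached_price_multiplier: float) -> float:
    """Input cost of one request when part of the prompt is billed at a cached rate.

    cached_price_multiplier is the fraction of the normal price charged for
    cached tokens (1.0 means no caching discount).
    """
    billable = cached_tokens * cached_price_multiplier + fresh_tokens
    return billable * price_per_million / 1_000_000


# Hypothetical request: 10,000-token system prompt plus 500 tokens of user input.
without_cache = input_cost(10_000, 500, price_per_million=3.00, cached_price_multiplier=1.0)
with_cache = input_cost(10_000, 500, price_per_million=3.00, cached_price_multiplier=0.10)
print(f"${without_cache:.4f} vs ${with_cache:.4f} per request "
      f"({1 - with_cache / without_cache:.0%} lower)")
```

The larger the repeated portion of the prompt relative to the fresh portion, the closer the total saving gets to the discount on cached tokens.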

Semantic caching is more complex to implement and maintain but can yield dramatic savings for the right use cases. The critical question is whether your workload has a high rate of semantically similar queries. A customer support bot with 50 frequently asked questions is an excellent candidate. An open-ended research assistant is not: its queries are too varied for cache hit rates to justify the overhead.

When evaluating caching middleware, ask for projected and actual cache hit rates. A caching layer with a 10% hit rate may not justify its implementation and maintenance cost. A 60% hit rate almost certainly does.
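A break-even calculation makes the hit-rate question concrete. The sketch below assumes each cache hit avoids exactly one model call and that the caching layer has a fixed monthly cost covering infrastructure and maintenance; all figures are hypothetical.

```python
def net_monthly_savings(requests: int, cost_per_model_call: float,
                        hit_rate: float, cache_monthly_cost: float) -> float:
    """Model spend avoided by cache hits, minus the cost of running the cache."""
    return requests * hit_rate * cost_per_model_call - cache_monthly_cost


def break_even_hit_rate(requests: int, cost_per_model_call: float,
                        cache_monthly_cost: float) -> float:
    """Hit rate at which the cache pays for itself."""
    return cache_monthly_cost / (requests * cost_per_model_call)


# Hypothetical workload: 1,000,000 requests per month at $0.002 per model call,
# with a caching layer that costs $500 per month to run and maintain.
for rate in (0.10, 0.60):
    print(f"hit rate {rate:.0%}: net ${net_monthly_savings(1_000_000, 0.002, rate, 500):,.0f}")
print(f"break-even hit rate: {break_even_hit_rate(1_000_000, 0.002, 500):.0%}")
```

In this example the cache loses money at a 10% hit rate and pays for itself above 25%. The break-even point shifts with request volume and per-call cost, so it should be recomputed for each workload.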

Evaluation & Quality Assurance

Knowing whether an AI system is working well (producing accurate, relevant, safe, and useful outputs) is a distinct engineering problem from building the system itself. Evaluation tooling provides systematic frameworks for measuring model quality, detecting regressions when models or prompts change, and ensuring compliance with safety and policy requirements.

LLM evaluation frameworks like RAGAS (specifically for RAG systems), DeepEval, Promptfoo, and Braintrust provide automated quality measurement against defined criteria. Human evaluation platforms like Scale AI, Labelbox, and Surge AI coordinate human raters for tasks where automated evaluation is insufficient. Red-teaming and safety evaluation tools help identify failure modes and policy violations before they reach production.

FinOps Considerations

Evaluation is an area where underinvestment has significant indirect cost consequences. An AI system producing poor-quality outputs drives user re-prompting (additional token costs), failed workflows (additional processing costs), and, in high-stakes applications, errors that require human remediation. The cost of a robust evaluation framework is almost always less than the cost of the quality failures it prevents.

Evaluation also has direct relevance to the model selection decision. Before committing to a higher-cost model, require that the team demonstrate through evaluation that the cheaper alternative does not meet quality requirements for the specific use case. Generic benchmarks are insufficient evidence; task-specific evaluation against production-representative data is required.

When models or prompts are updated, regression testing to confirm that quality has not degraded should be automated and treated as a cost control measure, not just a quality control measure. An update that improves one dimension of quality while degrading another, causing more failures and retries, has a real cost impact.

Inference

Inference Serving & Deployment Platforms

Once a model is trained or selected, it needs to be made accessible to applications. Inference serving platforms handle containerization, scaling, load balancing, batching, and monitoring of model endpoints. They abstract away the operational complexity of running models reliably at scale.

Managed inference platforms like Baseten, Fireworks.ai, Together.ai, and Replicate let teams deploy models without building serving infrastructure from scratch. Self-hosted serving frameworks like vLLM, TGI (Text Generation Inference), and NVIDIA Triton Inference Server give more control at the cost of operational overhead.

A critical concept in inference serving is continuous batching: instead of processing requests one at a time, or waiting for an entire batch to finish before starting the next, the server groups concurrent requests into shared model passes and admits new requests as earlier ones complete. This dramatically increases GPU throughput and reduces cost per request. Most production serving frameworks implement this, but not all deployments are configured to take advantage of it.

FinOps Considerations

The core question is whether the organization has the engineering capacity to manage self-hosted serving infrastructure. Self-hosted frameworks can be significantly cheaper at scale, but they require ongoing operational investment: security patching, scaling configuration, monitoring, and incident response. For many organizations, managed platforms represent a better total cost of ownership once engineering time is factored in.

Ask about throughput metrics, not just latency. A serving platform optimized purely for low latency (fast responses for individual requests) may actually have worse GPU utilization and higher cost than one optimized for throughput (maximum requests processed per GPU per second). For internal or asynchronous use cases, higher latency is often perfectly acceptable if it comes with substantially lower cost.

Understand the autoscaling behavior. Scale-up time (how quickly new GPU capacity can be provisioned when demand spikes) determines how much buffer capacity must be maintained at baseline. Slow scale-up means more permanently-on idle capacity; fast scale-up enables tighter provisioning.

Vector Databases & Retrieval (RAG)

Retrieval-Augmented Generation (RAG) is an architectural pattern where, instead of relying solely on what a model learned during training, relevant information is retrieved from an external knowledge base and injected into the prompt at inference time. This allows models to answer questions about data they were never trained on, such as internal company documents, recent events, or proprietary product catalogs, without expensive fine-tuning.

Vector databases are the retrieval layer: they store documents as high-dimensional numerical representations (embeddings) that capture semantic meaning, and efficiently find documents most similar to the query. Leading options include Pinecone, Weaviate, Milvus, Qdrant, and Chroma. Managed embedding services from OpenAI, Cohere, and others generate the embeddings themselves.

RAG sits at the intersection of cost optimization and quality: by retrieving only the specific document snippets relevant to a query, it dramatically reduces the number of tokens that need to be sent to the model compared to including entire documents or knowledge bases in the prompt.

FinOps Considerations

RAG infrastructure has costs that are frequently underestimated in initial proposals: embedding generation (which must be done for all documents at ingestion, and re-done when documents change), vector database storage and query costs, and the ongoing maintenance of the retrieval pipeline. Understand whether these are one-time or recurring costs.

Ask about retrieval quality — specifically, whether the system is retrieving the right documents, not just any documents. Poor retrieval quality typically manifests as increased hallucination rates, which in turn drives user dissatisfaction, repeat queries, and potentially costly errors in downstream workflows. A vector database that is fast and cheap but returns irrelevant results is a false economy.

For smaller, static knowledge bases (a few hundred to a few thousand documents), full RAG infrastructure may be unnecessary. Simpler keyword search, or even including all relevant context in a long context window (many frontier models now support 100K–1M tokens), may be more cost-effective when factored against the engineering investment of building and maintaining a RAG pipeline.

AI Gateway & API Management

As organizations use multiple AI models from multiple providers, managing API keys, rate limits, routing, fallbacks, and cost allocation across those providers becomes operationally complex. AI gateway tools sit in front of model APIs and provide a unified control plane.

OpenRouter provides a unified API that routes requests to dozens of different model providers, enabling model switching without application code changes. LiteLLM is an open-source library and proxy that translates between different provider API formats. Kong and Apigee are enterprise API gateways that can be extended for AI traffic management. Native gateway offerings from cloud providers like Amazon Bedrock, Azure AI Foundry, and Google Vertex AI provide similar capabilities within their respective ecosystems.

These tools enable capabilities like: automatic fallback to a backup model when a primary provider experiences downtime, rate limiting to prevent runaway costs, load balancing across providers for reliability, and centralized logging of all AI API traffic.

FinOps Considerations

AI gateway tooling is particularly valuable for organizations using three or more different AI models or providers. Below that threshold, the operational overhead of running a gateway may exceed its benefits. Above it, the lack of a gateway typically means fragmented visibility, duplicated API key management, and no ability to enforce spend controls centrally.

The cost transparency use case is compelling: a gateway that logs every API call with metadata (which team made the call, which application, which model, input and output token counts) provides the raw data needed for accurate AI cost allocation. This is foundational FinOps infrastructure for organizations at scale.
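As an illustration of how gateway logs become cost allocation, the sketch below sums cost per team and application from a handful of log records. The record fields, model names, and per-million-token prices are all hypothetical; a real gateway will have its own log schema and the provider's actual price list.

```python
from collections import defaultdict

# Hypothetical price table in USD per million tokens: (input, output).
PRICES = {"small-model": (0.50, 1.50), "large-model": (5.00, 15.00)}


def allocate_costs(records):
    """Sum cost per (team, application) from gateway log records."""
    totals = defaultdict(float)
    for r in records:
        in_price, out_price = PRICES[r["model"]]
        cost = (r["input_tokens"] * in_price + r["output_tokens"] * out_price) / 1_000_000
        totals[(r["team"], r["application"])] += cost
    return dict(totals)


records = [
    {"team": "support", "application": "chatbot", "model": "small-model",
     "input_tokens": 2_000_000, "output_tokens": 400_000},
    {"team": "support", "application": "chatbot", "model": "large-model",
     "input_tokens": 100_000, "output_tokens": 20_000},
    {"team": "legal", "application": "contract-review", "model": "large-model",
     "input_tokens": 1_000_000, "output_tokens": 200_000},
]

for (team, app), cost in allocate_costs(records).items():
    print(f"{team}/{app}: ${cost:.2f}")
```

The same aggregation works for any dimension the gateway records, which is why consistent metadata on every call matters more than the choice of gateway product.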

Ask whether the gateway supports budget guardrails: the ability to stop or alert when a team’s or application’s spending reaches a threshold. In environments without guardrails, a misconfigured agent or a suddenly viral feature can generate unexpected bills before anyone notices.
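The logic of a guardrail is straightforward, as the following sketch suggests. The class name, the 80% alert threshold, and the choice to reject a call that would exceed the limit are assumptions made for the example; actual gateways expose this behavior through their own configuration, and usually check an estimated cost before the call is made.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    """Tracks spend against a monthly limit for one team or application."""
    monthly_limit: float
    alert_fraction: float = 0.8  # assumed threshold for raising an alert
    spent: float = 0.0

    def record(self, cost: float) -> str:
        """Apply a cost and return 'ok', 'alert', or 'block'."""
        if self.spent + cost > self.monthly_limit:
            return "block"  # the call is rejected, so spend is not recorded
        self.spent += cost
        if self.spent >= self.alert_fraction * self.monthly_limit:
            return "alert"
        return "ok"


guard = BudgetGuard(monthly_limit=100.0)
for cost in (50.0, 35.0, 20.0):
    print(cost, guard.record(cost))
```

Whether to hard-stop or only alert is a business decision: blocking protects the budget but can take a customer-facing feature offline, so the threshold behavior should be agreed with the owning team in advance.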

Orchestration & Operations

Hardware & Compute Platforms

This category encompasses the actual silicon and cloud compute that powers AI workloads. The primary options are:

Cloud GPU instances from AWS (P4, P5, P6, and G-series), Azure (NC and ND-series), and GCP (A2 and A3 instances), most of which run NVIDIA data-center GPUs such as the A100, H100, H200, or B200. These are the workhorses of both training and inference.

Inference-optimized accelerators like AWS Inferentia2 and Google TPU v5e are purpose-built for running inference workloads at lower cost per token than general-purpose GPUs. They sacrifice some flexibility for significant cost efficiency.

Competing GPU hardware, including AMD’s MI300X, is increasingly viable and often priced more aggressively than NVIDIA equivalents, though software ecosystem maturity remains a consideration.

Serverless and on-demand GPU platforms like Modal, RunPod, Lambda Labs, and Vast.ai offer pay-per-second GPU access without long-term commitments, making them suitable for intermittent workloads.

FinOps Considerations

The fundamental question with compute is whether the hardware class matches the workload. Over-provisioning is endemic in AI: teams often request H100s (a top-tier GPU) for workloads that run perfectly well on L40S or A10G GPUs at a fraction of the cost. Understand the model size being served. A 7-billion-parameter model does not need the same hardware as a 70-billion-parameter model.

Reservation and commitment-based pricing deserves attention for sustained workloads. On-demand GPU instances can cost two to three times as much as one- or three-year reserved instances. However, reservations are a significant commitment, and AI infrastructure needs change rapidly. A sensible approach is to reserve baseline capacity and use on-demand or serverless for burst or experimental work.

For inference workloads specifically, ask whether autoscaling to zero is implemented. A GPU server running idle overnight costs the same as one serving traffic. Serverless platforms that scale to zero are often meaningfully cheaper for workloads with variable or low traffic, even at a higher per-second cost when active.
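The trade-off between an always-on instance and a scale-to-zero platform comes down to how many hours per month the workload is actually active. The sketch below compares the two; the hourly rates are hypothetical, 730 is used as the average number of hours in a month, and cold-start latency is ignored.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def always_on_cost(hourly_rate: float) -> float:
    """Monthly cost of an instance that never scales down."""
    return hourly_rate * HOURS_PER_MONTH


def scale_to_zero_cost(active_hourly_rate: float, active_hours: float) -> float:
    """Monthly cost when billing stops while the endpoint is idle."""
    return active_hourly_rate * active_hours


def break_even_active_hours(always_on_rate: float, serverless_rate: float) -> float:
    """Active hours per month above which the always-on instance is cheaper."""
    return always_on_rate * HOURS_PER_MONTH / serverless_rate


# Hypothetical: $2.00/hour always-on versus $4.00/hour serverless, active 200 hours a month.
print(f"always-on:     ${always_on_cost(2.00):,.0f}")
print(f"scale-to-zero: ${scale_to_zero_cost(4.00, 200):,.0f}")
print(f"break-even:    {break_even_active_hours(2.00, 4.00):.0f} active hours")
```

In this example the serverless option is cheaper despite costing twice as much per active hour, and it stays cheaper until the workload is busy for about half the month.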

Orchestration & Agent Frameworks

As AI systems grow more complex, they often involve multiple models working in sequence or in parallel, calling external tools and APIs, making decisions based on intermediate results, and maintaining state across extended workflows. Orchestration frameworks provide the scaffolding for these multi-step, multi-model pipelines.

Agent frameworks like LangChain, LlamaIndex, AutoGen, and CrewAI provide abstractions for defining agents: AI systems that can plan, reason, use tools, and take actions to accomplish goals. Workflow orchestrators like Prefect, Airflow, and Temporal handle the scheduling, error handling, and state management of AI pipelines alongside traditional data workflows.

Kubernetes with Kubeflow, Slurm, and Ray serve as the underlying compute orchestration layer, managing how GPU and CPU resources are allocated across distributed AI workloads.

FinOps Considerations

Agent frameworks are where AI costs can become unpredictable most quickly. An agent that iterates through a loop of reasoning, taking an action, observing the result, and reasoning again generates LLM calls (and therefore tokens) at every step. An agent that loops unexpectedly, or that is given an underspecified goal, can generate hundreds or thousands of LLM calls before producing a result or failing. Budget controls and maximum iteration limits are not optional safeguards; they are essential cost controls.
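The two controls named above, an iteration limit and a token budget, can be sketched as a wrapper around the agent loop. This is a framework-agnostic illustration: the `step` callable stands in for one reason-act-observe cycle and is hypothetical, as are the status strings. Agent frameworks typically offer their own settings for these limits.

```python
from typing import Callable, Tuple


def run_agent(step: Callable[[int], Tuple[bool, int]],
              max_iterations: int, max_tokens: int) -> Tuple[str, int, int]:
    """Run `step` until it reports completion or a limit is hit.

    step(i) performs iteration i and returns (done, tokens_used).
    Returns (status, iterations_run, tokens_spent).
    """
    tokens_spent = 0
    for i in range(max_iterations):
        done, tokens_used = step(i)
        tokens_spent += tokens_used
        if done:
            return "completed", i + 1, tokens_spent
        if tokens_spent >= max_tokens:
            return "token_budget_exhausted", i + 1, tokens_spent
    return "iteration_limit_reached", max_iterations, tokens_spent


def looping_step(i: int) -> Tuple[bool, int]:
    """A stand-in for an agent that never finishes, using 1,000 tokens per step."""
    return False, 1_000


print(run_agent(looping_step, max_iterations=10, max_tokens=50_000))
print(run_agent(looping_step, max_iterations=100, max_tokens=5_000))
```

Either limit alone leaves a gap: an iteration cap does not bound the cost of steps that consume very large contexts, and a token budget does not bound wall-clock time, so both are worth asking about.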

Understand the cost multiplier effect of agentic systems. A single user interaction that triggers an agent might result in 5, 10, or 50 separate model API calls, each with its own token cost and each accumulating context window overhead. The FinOps visibility challenge is acute here: your billing dashboard will show API costs, but without tracing instrumentation it is very difficult to connect those costs back to specific user interactions or agent runs.
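The context overhead is worth quantifying, because it grows faster than the number of calls. The sketch below assumes the simplest case, in which each model call resends the full history and each step appends a fixed number of tokens; the token counts are hypothetical, and prompt caching or context trimming would reduce the totals.

```python
def agent_input_tokens(base_prompt_tokens: int, tokens_added_per_step: int, steps: int) -> int:
    """Total input tokens across a run where every call resends the whole history."""
    total = 0
    context = base_prompt_tokens
    for _ in range(steps):
        total += context                   # this call reads everything accumulated so far
        context += tokens_added_per_step   # the step's result is appended to the history
    return total


single_call = agent_input_tokens(1_000, 500, steps=1)
for steps in (10, 50):
    total = agent_input_tokens(1_000, 500, steps)
    print(f"{steps} steps: {total:,} input tokens ({total / single_call:.1f}x a single call)")
```

Under these assumptions a 50-step run consumes far more than 50 times the input tokens of a single call, because total input grows roughly with the square of the step count.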

Ask whether the team has implemented tracing and cost attribution at the agent level: the ability to see exactly how many tokens a specific agent run consumed, across all model calls. Tools like LangSmith, Arize Phoenix, and Weights & Biases provide this visibility. Without it, cost anomalies in agentic systems are nearly impossible to diagnose.

Observability, Monitoring & FinOps-Specific Tooling

This is the category that FinOps practitioners should care about most, and it is frequently the last one funded. AI observability tools provide visibility into what models are doing, how much they cost, what quality the outputs are, and where the money is going.

LLM observability platforms like Arize Phoenix, LangSmith, Weights & Biases (W&B), Helicone, and PromptLayer capture traces of every LLM call including the prompt sent, the response received, the token counts, the latency, and the cost. This data is essential for debugging quality issues and for understanding cost drivers.

FinOps-specific tools like Vantage, CloudZero, Apptio, and emerging AI-specific platforms like Pay-i provide cost allocation, anomaly detection, and unit economics dashboards for AI workloads. They bridge the gap between raw cloud billing data and the business-level view of cost per use case.

Infrastructure monitoring via Grafana, Datadog, and Prometheus provides GPU utilization, queue depths, and system health metrics that inform rightsizing and capacity planning decisions.

FinOps Considerations

There is a common pattern in AI cost overruns: costs grow, the team cannot explain why, and the investigation requires days of manual log analysis to produce an answer. Observability tooling exists precisely to prevent this. It should be treated as a non-negotiable component of any production AI system, not an optional add-on.

The key metrics to insist on having visibility into are: cost per use case (not just total spend), token consumption breakdown (input vs. output, cached vs. uncached, by model), GPU utilization (idle time is wasted money), and error rates and retry rates (repeated calls due to errors are pure waste).

AI FinOps tooling also enables chargeback and showback, attributing AI costs to the specific teams, products, or business units generating them. Without this attribution, AI costs sit in a shared pool with no accountability, and the teams generating the most costs have no incentive to optimize.

Data Pipelines & Feature Stores

AI systems are only as good as the data that feeds them. Data pipelines ingest, transform, validate, and deliver the data that trains models, populates vector databases, and provides context during inference. Feature stores cache and serve the computed representations of data (features) that models consume.

Tools in this category include Apache Kafka and Confluent for real-time data streaming, dbt and Apache Spark for data transformation, Feast and Tecton for feature store management, and Airflow and Prefect for pipeline orchestration.

Storage costs are a significant and growing portion of AI TCO that is easy to overlook. Training datasets, model checkpoints, embedding stores, inference logs, and evaluation datasets all accumulate over time. At scale, storage for AI can rival compute as a cost driver.

FinOps Considerations

Data pipelines are where AI costs bleed into traditional cloud costs in ways that are difficult to track. A data pipeline that reprocesses the same documents daily when only a small fraction have changed is generating avoidable compute and storage costs. Understand the incremental processing model: are pipelines designed to process only new or changed data, or do they reprocess everything on each run?

Embedding costs deserve specific attention in RAG architectures. Every document in the knowledge base must be converted to an embedding, and that embedding must be recomputed whenever the document changes or whenever the embedding model is upgraded. For large knowledge bases (millions of documents), this can be a significant recurring cost. Ask how frequently re-embedding is triggered and why.
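One common way to avoid unnecessary re-embedding is to store a hash of each document's content and only embed documents whose hash has changed. The sketch below shows the selection step using Python's standard `hashlib`; the function names and the in-memory dictionaries are illustrative, and a real pipeline would persist the hashes alongside the vectors.

```python
import hashlib
from typing import Dict, List


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def select_for_embedding(documents: Dict[str, str], stored_hashes: Dict[str, str]) -> List[str]:
    """Return IDs of documents that are new or whose content has changed."""
    return [
        doc_id
        for doc_id, text in documents.items()
        if stored_hashes.get(doc_id) != content_hash(text)
    ]


documents = {"policy-1": "Refunds within 30 days.", "policy-2": "Shipping takes 5 days."}
stored = {
    "policy-1": content_hash("Refunds within 30 days."),  # unchanged since last run
    "policy-2": content_hash("Shipping takes 7 days."),   # content has since changed
}
print(select_for_embedding(documents, stored))
```

This only addresses document changes. An embedding model upgrade still requires re-embedding the entire knowledge base, which is why the upgrade decision deserves its own cost estimate.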

Where Tool Costs Appear

Tooling costs in any of these phases may appear in a variety of cost and usage sources: direct public cloud services, direct subscriptions with vendors (SaaS model), cloud marketplace purchases, new or existing SKUs on enterprise agreements with other software companies, and the expense reports of employees who sign up for subscriptions or trials (a form of shadow IT), among others. Cost reporting also may not account for “free” or open source tooling that has been selected or used. Working with service management, ITAM, or security teams may also identify tooling that is in use (important for security and configuration purposes) but not yet costing the organization monetarily.

The Build vs. Buy vs. Managed Service Decision

Tools have a spectrum of options ranging from fully managed (pay a vendor to run it for you) to fully self-hosted (build and operate it yourself). The right answer varies by product and organizational context, but a few principles apply broadly.

Managed services have a higher unit cost but lower total cost of ownership when engineering capacity is constrained or when the underlying technology changes rapidly. Inference serving, for example, is a domain where the open-source tooling is advancing very quickly. A team that builds custom serving infrastructure may find it obsolete within a year.

Self-hosted tools are cost-effective at scale but require sustained engineering investment in operations, security, and maintenance. A vector database that a team self-hosts to save $500 per month may require $5,000 per month in engineering time to operate reliably.

The hidden cost of undifferentiated engineering is significant. Every hour an AI engineering team spends operating infrastructure is an hour not spent on the product capabilities that differentiate the business. FinOps practitioners should factor this into tool evaluations, even when it is difficult to quantify precisely.

The Real Unit of Measure: Use Case Economics

Individual tools and their costs are only part of the picture. The most important FinOps concept for AI systems is use case economics: the total cost of achieving a specific business outcome, measured per unit of that outcome.

Examples of use case units: cost per customer query resolved, cost per document summarized, cost per code review completed, cost per sales call analyzed. These metrics connect AI spend to business value in a way that raw token counts or GPU-hours never can.

Use case economics are sensitive to almost every category of tool discussed in this guide. A change in the inference serving configuration, a new caching layer, a switch to a smaller model, or a more efficient RAG pipeline can each dramatically shift the cost per unit. This means that use case costs are not static. They require ongoing measurement and should be a standard component of any AI program review.

FinOps practitioners should push teams to define their use case unit early, instrument for it, and report on it regularly. “We spent $50,000 on AI last month” is not useful information. “We resolved 200,000 customer queries at an average cost of $0.25 per query, down from $0.40 last quarter” is actionable.
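The calculation itself is trivial, which is part of the point: the hard work is instrumenting the unit count, not the arithmetic. The sketch below reproduces the figures from the example above; the function names are illustrative.

```python
def cost_per_unit(total_cost: float, units: int) -> float:
    """Cost of one unit of business outcome (for example, one resolved query)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


def percent_change(current: float, previous: float) -> float:
    """Relative change from the previous period, as a percentage."""
    return (current - previous) / previous * 100


current = cost_per_unit(50_000, 200_000)
print(f"${current:.2f} per query, {percent_change(current, 0.40):+.1f}% vs last quarter")
```

Reporting the trend alongside the absolute number is what makes the metric actionable, since it shows whether optimization work is having an effect.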

AI Tool Lifecycle Management

Approving an AI tool is not the end of a FinOps engagement; it is the beginning. The cost dynamics of AI systems change faster than almost any other technology category. Models are updated, architectures evolve, usage patterns shift, and new optimization techniques emerge continuously. A system that was well-optimized six months ago may be significantly over-spending (or obsolete) today.

The questions to establish at approval time for any tool or system element, and to revisit regularly, are:

Visibility: Can we see the cost of this system broken down by use case, team, and model? Can we tag its cost, and if not, what mechanisms are in place for allocation? Are there alerts for anomalous spend?

Accountability: Which team owns the cost of this system? Do they have both the visibility and the authority to optimize it? Will they be responsible for deprecating it when no longer required?

Optimization cadence: How frequently is the team reviewing and acting on cost optimization opportunities? Is there a regular process for evaluating whether newer, cheaper models meet quality requirements?

Exit criteria: If the cost per unit outcome exceeds a defined threshold, what is the plan? Is there an alternative approach, a fallback model, or a decision point at which the use case is reconsidered?

FinOps for AI tooling is ultimately a collaboration between procurement, finance, operations, and engineering. No single team has all the information needed to manage these costs well.

FinOps practitioners bring the financial discipline and the organizational authority to ask hard questions. Engineers bring the technical understanding of what the tools actually do and where the levers are. The organizations that manage AI costs most effectively are the ones that make this collaboration a regular, structured practice—not a reactive conversation after an unexpected bill arrives.

Acknowledgments

We’d like to thank the following people for their work on this Paper: